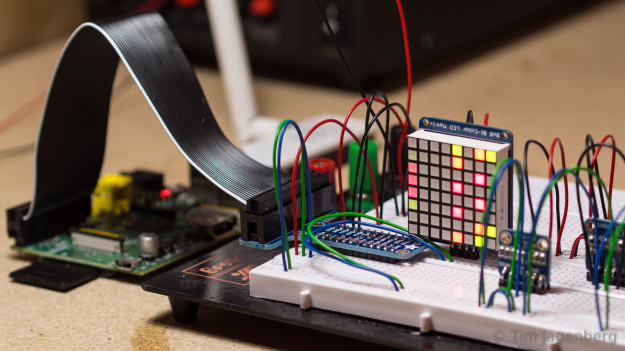

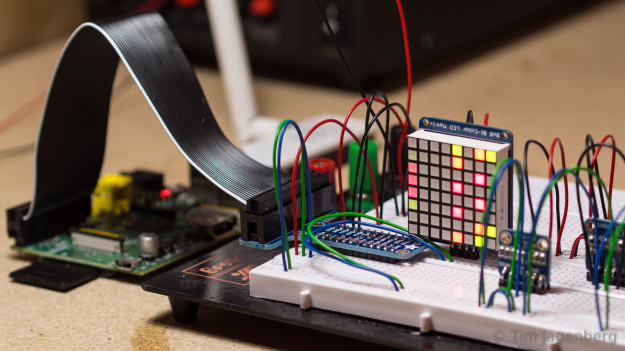

I recently ordered a bunch of I2C breakout boards to tinker with. The first thing I implemented, using the Pi4J library, was a simple 8×8 version of Conway’s Game of Life on an I2C-controlled bicolor LED matrix from Adafruit.
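For reference, the game logic on such a small grid fits in a few lines. The following is only a minimal sketch of one generation step, not the code running on the matrix: the class name `LifeGrid` and its methods are made up for illustration, the wrap-around at the edges is an assumption, and the Pi4J calls that push the result to the LED matrix are left out entirely.

```java
// Hypothetical helper for illustration; pure game logic, no Pi4J or LED matrix access.
class LifeGrid {
    static final int SIZE = 8;

    // Computes the next generation from the current one.
    // Assumption: the grid wraps around at the edges (toroidal board).
    static boolean[][] step(boolean[][] cells) {
        boolean[][] next = new boolean[SIZE][SIZE];
        for (int row = 0; row < SIZE; row++) {
            for (int col = 0; col < SIZE; col++) {
                int n = countNeighbours(cells, row, col);
                // A live cell survives with 2 or 3 neighbours; a dead cell is born with exactly 3.
                next[row][col] = cells[row][col] ? (n == 2 || n == 3) : (n == 3);
            }
        }
        return next;
    }

    // Counts the live cells among the eight neighbours of (row, col).
    static int countNeighbours(boolean[][] cells, int row, int col) {
        int count = 0;
        for (int dr = -1; dr <= 1; dr++) {
            for (int dc = -1; dc <= 1; dc++) {
                if (dr == 0 && dc == 0) {
                    continue; // skip the cell itself
                }
                int r = (row + dr + SIZE) % SIZE;
                int c = (col + dc + SIZE) % SIZE;
                if (cells[r][c]) {
                    count++;
                }
            }
        }
        return count;
    }

    public static void main(String[] args) {
        boolean[][] cells = new boolean[SIZE][SIZE];
        // Seed a horizontal "blinker" in row 3.
        cells[3][2] = cells[3][3] = cells[3][4] = true;
        cells = step(cells);
        // Print the grid as text; on the real hardware each cell would map to one LED.
        for (boolean[] rowCells : cells) {
            StringBuilder line = new StringBuilder();
            for (boolean alive : rowCells) {
                line.append(alive ? '#' : '.');
            }
            System.out.println(line);
        }
    }
}
```

On a bicolor matrix the second color could, for example, mark newly born cells, but that is a display choice and not part of the rules above.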

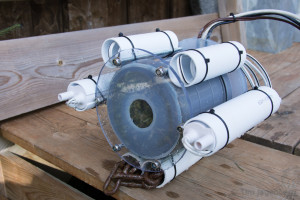

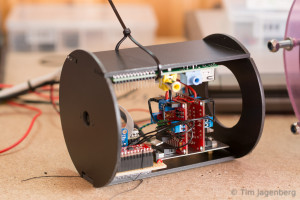

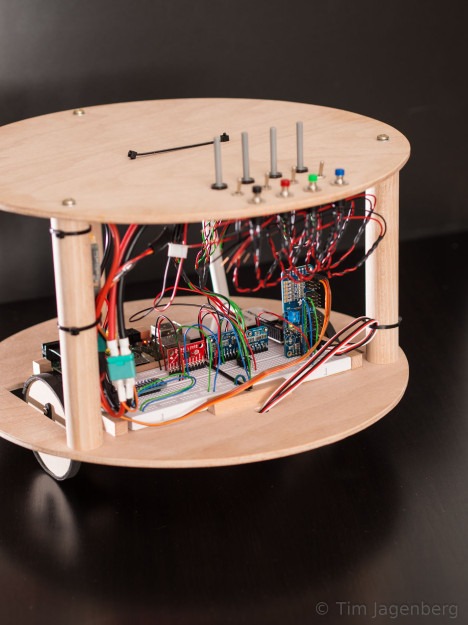

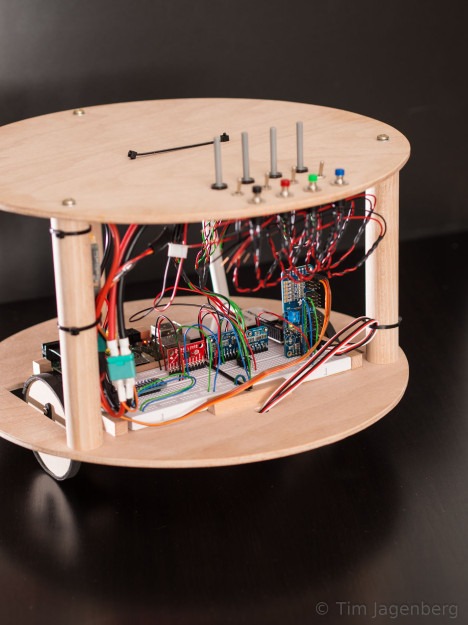

Next I built a simple two-wheeled robotic platform (RPi2C) to test the 9DOF MPU9150 breakout board from SparkFun. This little chip integrates an accelerometer, a gyroscope, and a magnetometer into a single package and also includes what InvenSense calls a Digital Motion Processor (DMP) for on-board sensor fusion.

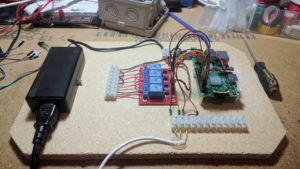

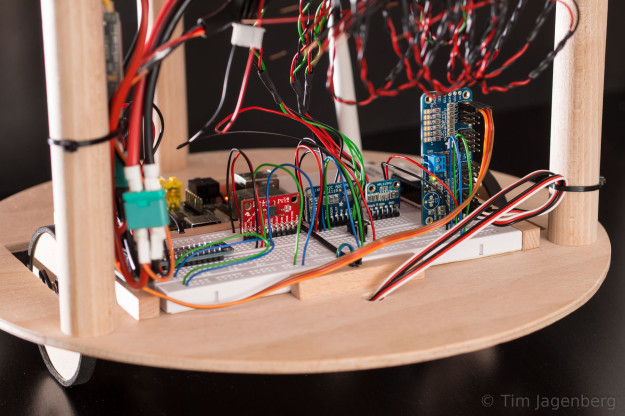

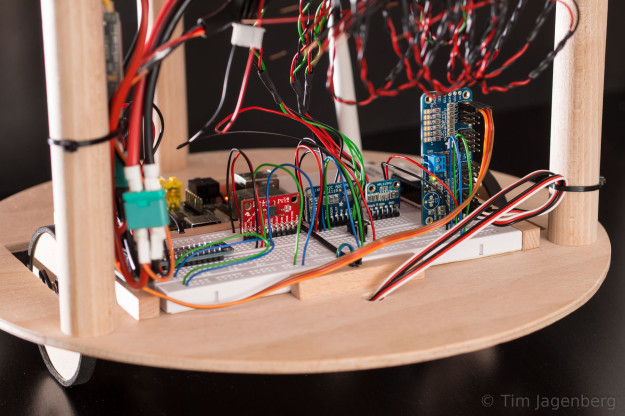

The two wheels are driven by basic Parallax continuous rotation servos and controlled by the same I2C-based PCA9685 breakout board from Adafruit that I already used on the RaspberryPylot. The MPU9150 DMP requires firmware to be uploaded via I2C, which I still need to implement, so for now I’m simply using the raw gyro data and a PID controller for very basic stabilisation. The low rotational speed of the servo motors also limits the ability to recover from disturbances. Fusing the accelerometer and gyro data, an improved controller, and maybe stronger motors should deliver better results soon.
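To give an idea of what the PID part looks like, here is a minimal textbook-style sketch in Java. It is not the controller running on the robot: the class name `PidController`, the gains, and the 10 ms loop period are placeholder assumptions, and reading the gyro or driving the PCA9685 via Pi4J is not shown.

```java
// Hypothetical textbook PID controller for illustration; gains and timing are placeholders.
class PidController {
    private final double kp;
    private final double ki;
    private final double kd;
    private double integral;
    private double previousError;
    private boolean firstUpdate = true;

    PidController(double kp, double ki, double kd) {
        this.kp = kp;
        this.ki = ki;
        this.kd = kd;
    }

    // Returns the control output for one time step of length dtSeconds.
    // For the balancing case the setpoint would be 0 (no rotation) and
    // the measurement the raw gyro rate around the wheel axis.
    double update(double setpoint, double measured, double dtSeconds) {
        double error = setpoint - measured;
        integral += error * dtSeconds;
        // No derivative term on the first call, since there is no previous error yet.
        double derivative = firstUpdate ? 0.0 : (error - previousError) / dtSeconds;
        firstUpdate = false;
        previousError = error;
        return kp * error + ki * integral + kd * derivative;
    }

    public static void main(String[] args) {
        PidController pid = new PidController(2.0, 0.5, 0.1); // made-up gains
        double dt = 0.01; // assumed 10 ms control loop
        double[] gyroRates = {-10.0, -8.0, -3.0, 1.0}; // made-up readings in deg/s
        for (double rate : gyroRates) {
            double output = pid.update(0.0, rate, dt);
            // On the robot this value would be scaled into a servo pulse width.
            System.out.println("gyro=" + rate + " output=" + output);
        }
    }
}
```

The output would still have to be clamped and mapped onto the pulse-width range the continuous rotation servos accept, which is where their limited speed becomes the bottleneck.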

The platform also includes a basic breadboard, a bunch of potentiometers, switches, and buttons, as well as an ADS1115 16-bit ADC and an SX1509 16 Output I/O Expander for happy tinkering. 😉